From Basics to Beyond: Understanding Context Windows, Prompt Engineering & Advanced API Features (Explainers & Practical Tips)

This section moves from foundational concepts to advanced applications, covering crucial aspects of using large language models effectively. We begin with the context window: the limited 'field of view' a model has when processing a request. It is measured in tokens and shared between your input (system prompt, conversation history, documents) and the model's generated output. Understanding how it works is essential, because anything that falls outside the window is invisible to the model. That limit directly affects its ability to recall earlier information, stay coherent across turns, and generate relevant responses. We'll explore practical strategies for making the most of this budget, such as summarizing older material and splitting long documents into chunks, so that your prompts fit within the limit while preserving the information the model needs. This foundation lays the groundwork for more sophisticated interactions and clearer, more effective prompts.
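The chunking strategy described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production tokenizer. It assumes a rough heuristic of about four characters per token (an assumption; real tokenizers vary by model and language), and the helper names `estimate_tokens` and `chunk_words` are hypothetical. For exact counts, use your provider's own tokenizer or token-counting endpoint.

```python
import math

# Assumption: roughly 4 characters per token for English text.
# This is only an estimate; real tokenizers differ by model and language.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate a token count using the characters-per-token heuristic."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def chunk_words(text: str, max_tokens: int, overlap_words: int = 0) -> list[str]:
    """Split text into word-based chunks whose estimated size fits max_tokens.

    Consecutive chunks share `overlap_words` words, so text that straddles
    a chunk boundary keeps some of its surrounding context.
    """
    words = text.split()
    chunks: list[str] = []
    start = 0
    while start < len(words):
        end = start
        # Grow the chunk one word at a time while it still fits the budget.
        while end < len(words) and estimate_tokens(" ".join(words[start:end + 1])) <= max_tokens:
            end += 1
        if end == start:
            # A single word exceeds the budget: emit it alone instead of looping forever.
            end = start + 1
        chunks.append(" ".join(words[start:end]))
        if end >= len(words):
            break
        # Step back by the overlap, but always make forward progress.
        start = max(end - overlap_words, start + 1)
    return chunks
```

The overlap is a trade-off: each boundary repeats a few words (and spends a few tokens) in exchange for continuity between chunks.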

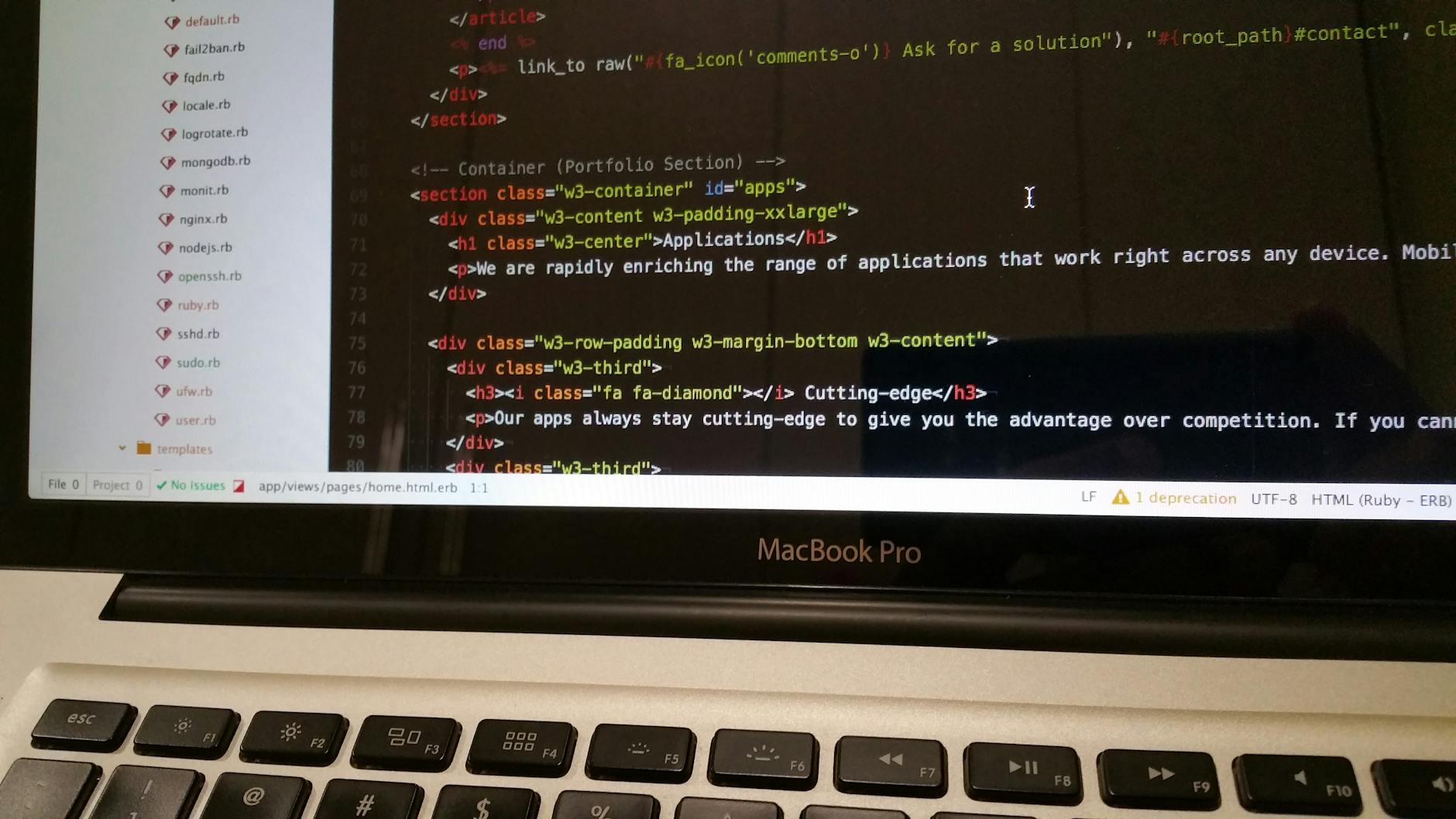

Building on a solid understanding of context windows, we move into the art and science of prompt engineering: deliberately structuring inputs to steer the model toward the outputs you want, from single questions to complex, multi-turn dialogues. We'll provide practical tips on techniques such as few-shot learning (including a few worked input/output examples in the prompt), role-playing (assigning the model a persona or role, typically in the system prompt), and chain-of-thought prompting (asking the model to reason step by step before answering), all of which can produce more insightful and accurate responses. We'll also introduce advanced API features offered by leading LLM providers. Temperature controls how random token sampling is: lower values give more deterministic output, higher values more varied output. Top-p (nucleus) sampling restricts sampling to the smallest set of most likely tokens whose cumulative probability reaches p. Function calling (called "tool use" in Anthropic's API) lets the model request calls to external tools, which your application then executes. Mastering these features lets you move beyond basic conversational AI to building powerful, integrated applications that draw on the full potential of large language models.
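A minimal sketch of how these techniques and parameters can fit together in a single request body. It assumes Anthropic's Messages API request format (`model`, `max_tokens`, `system`, `messages`, `temperature`, `tools`). The model ID is a placeholder, and the `lookup_product` tool, the few-shot examples, and the helper names `build_request` and `tool_results_message` are hypothetical. The second function illustrates the application side of function calling: when a response contains `tool_use` content blocks, your code runs the matching local function and sends the output back in `tool_result` blocks on the next user turn.

```python
import json

# Placeholder: substitute a current model ID from Anthropic's documentation.
MODEL_ID = "YOUR_MODEL_ID"

# Hypothetical tool in the Messages API tool format:
# a name, a description, and a JSON Schema describing the tool's input.
LOOKUP_TOOL = {
    "name": "lookup_product",
    "description": "Return catalog details for a product ID.",
    "input_schema": {
        "type": "object",
        "properties": {"product_id": {"type": "string"}},
        "required": ["product_id"],
    },
}

# Few-shot examples: each pair demonstrates the reasoning-then-label format.
FEW_SHOT = [
    ("Review: The battery died after a day.",
     "Reasoning: A battery failing this quickly is a defect.\nSentiment: negative"),
    ("Review: Setup took two minutes and it just works.",
     "Reasoning: Quick setup and reliable use are praised.\nSentiment: positive"),
]

SYSTEM_PROMPT = (
    "You are a meticulous product-review analyst. "  # role prompting
    "Reason step by step on a 'Reasoning:' line, "   # chain-of-thought instruction
    "then end with 'Sentiment: <label>'."
)


def build_request(review: str, temperature: float = 0.2, use_tools: bool = False) -> dict:
    """Assemble a request body from the role prompt, few-shot turns, and sampling settings."""
    messages = []
    for example_in, example_out in FEW_SHOT:
        messages.append({"role": "user", "content": example_in})
        messages.append({"role": "assistant", "content": example_out})
    messages.append({"role": "user", "content": review})
    request = {
        "model": MODEL_ID,
        "max_tokens": 512,
        "system": SYSTEM_PROMPT,
        "messages": messages,
        # Lower temperature -> more deterministic output. Anthropic's API reference
        # recommends altering temperature or top_p, not both, so top_p is left unset.
        "temperature": temperature,
    }
    if use_tools:
        request["tools"] = [LOOKUP_TOOL]
    return request


def tool_results_message(content_blocks: list[dict], registry: dict) -> dict:
    """Run each tool_use block through a local function and return a user
    message carrying the outputs as tool_result blocks."""
    results = []
    for block in content_blocks:
        if block.get("type") != "tool_use":
            continue  # skip text and other block types
        output = registry[block["name"]](**block["input"])
        results.append({
            "type": "tool_result",
            "tool_use_id": block["id"],
            "content": json.dumps(output),
        })
    return {"role": "user", "content": results}
```

The sketch makes no network call. With the official `anthropic` Python SDK, a dict like this can be passed as keyword arguments, for example `client.messages.create(**build_request(review))`.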

Anthropic continues to refine its AI offerings, with Claude Sonnet 4.6 representing a significant iteration in its "Sonnet" family of models. This version is expected to build on the strengths of its predecessors, likely offering improved performance across tasks ranging from complex reasoning to subtle conversational nuance. Developers and users looking for an accessible yet capable model will likely want to evaluate it against their own workloads.

Real-World Context: Practical Applications, Troubleshooting Common Issues & Future-Proofing Your Claude-Powered AI (Practical Tips & Common Questions)

Moving from theory to practice with Claude-powered AI solutions often yields valuable insights. Working in a real-world context means not just deploying your application, but actively monitoring its performance, iterating on user feedback, and adapting to evolving business needs. A common challenge arises when the examples you designed and tested against do not reflect live operational data, leading to skewed results. This is where practical troubleshooting comes in: error analysis (reviewing failures and grouping them by cause), augmenting your example and evaluation datasets with representative real cases, and adjusting prompts and request parameters (or, where your platform supports it, fine-tuning) to close the gap. Consider, too, the ethical implications and potential biases in your AI's outputs. Regularly auditing your Claude deployments for fairness and transparency builds trust and supports responsible AI use. Ultimately, success depends on continuous learning and adaptation within your specific operational environment.
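Error analysis and fairness auditing often start the same way: label a sample of logged interactions, then compare error rates across segments. The sketch below is a minimal, hypothetical version of that idea. The `LoggedCase` record, segment labels, and helper names are illustrative assumptions. It uses exact (case- and whitespace-insensitive) string matching, which only suits short, label-like outputs, and it flags segments against the unweighted mean of per-segment rates, which is one simple choice among many.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class LoggedCase:
    """One reviewed interaction: its segment, the expected output, and the actual output."""
    segment: str   # hypothetical grouping, e.g. query type, language, or user group
    expected: str
    actual: str


def error_rates_by_segment(cases: list[LoggedCase]) -> dict[str, float]:
    """Return the fraction of mismatched outputs in each segment."""
    totals: dict[str, int] = defaultdict(int)
    errors: dict[str, int] = defaultdict(int)
    for case in cases:
        totals[case.segment] += 1
        # Normalize case and surrounding whitespace before comparing.
        if case.actual.strip().lower() != case.expected.strip().lower():
            errors[case.segment] += 1
    return {seg: errors[seg] / totals[seg] for seg in totals}


def flag_segments(rates: dict[str, float], tolerance: float = 0.1) -> list[str]:
    """Flag segments whose error rate exceeds the mean per-segment rate by more than `tolerance`."""
    if not rates:
        return []
    mean_rate = sum(rates.values()) / len(rates)
    return sorted(seg for seg, rate in rates.items() if rate - mean_rate > tolerance)
```

A flagged segment is a prompt for closer review, not a verdict: small segments can show large swings in error rate by chance.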

To future-proof your Claude-powered AI and tackle common issues head-on, adopt a proactive, adaptable strategy. This means more than keeping your model versions up to date; it means building resilient systems that handle unexpected inputs gracefully and adapt to new information. Here are a few practical tips:

- Implement robust monitoring: Track key performance indicators (KPIs) like accuracy, latency, and user satisfaction to quickly identify deviations.

- Embrace MLOps practices: Automate deployment, testing, and monitoring pipelines for greater efficiency and reliability.

- Diversify your data sources: Avoid relying on a single dataset for examples and evaluation, so your system is more robust to changing inputs and to the biases of any one source.

- Plan for scalability: Design your infrastructure to handle increasing user loads and data volumes without sacrificing performance.

- Stay informed on Claude updates: Regularly review Anthropic's documentation for new features, best practices, and security enhancements.
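
The monitoring tip above can start as something very small. The sketch below is a hypothetical, in-process monitor (the class name, window size, and thresholds are illustrative assumptions) that keeps the most recent requests in a fixed-size window and reports when the 95th-percentile latency or the error rate crosses a limit. In production you would typically export these numbers to your existing monitoring and alerting system instead.

```python
from collections import deque


class RollingMonitor:
    """Track latency and failures over the last `window` requests and report threshold breaches."""

    def __init__(self, window: int = 100, p95_latency_ms: float = 2000.0,
                 max_error_rate: float = 0.05):
        # Illustrative thresholds; tune them to your own service-level targets.
        self.latencies: deque = deque(maxlen=window)
        self.failures: deque = deque(maxlen=window)
        self.p95_latency_ms = p95_latency_ms
        self.max_error_rate = max_error_rate

    def record(self, latency_ms: float, ok: bool) -> None:
        """Record one request; the oldest entry drops out once the window is full."""
        self.latencies.append(latency_ms)
        self.failures.append(0 if ok else 1)

    def p95(self) -> float:
        """Nearest-rank 95th percentile of latencies in the window (integer math avoids float rounding)."""
        if not self.latencies:
            return 0.0
        ordered = sorted(self.latencies)
        rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n), 1-based
        return ordered[rank - 1]

    def error_rate(self) -> float:
        """Fraction of failed requests in the window."""
        return sum(self.failures) / len(self.failures) if self.failures else 0.0

    def alerts(self) -> list[str]:
        """Return human-readable messages for every threshold currently exceeded."""
        found = []
        if self.p95() > self.p95_latency_ms:
            found.append(f"p95 latency {self.p95():.0f} ms exceeds {self.p95_latency_ms:.0f} ms")
        if self.error_rate() > self.max_error_rate:
            found.append(f"error rate {self.error_rate():.1%} exceeds {self.max_error_rate:.1%}")
        return found
```

A percentile is used rather than an average because a handful of very slow requests can hide inside a healthy-looking mean.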

By integrating these practices, you can ensure your AI remains a valuable asset for years to come.